`signed char` is a fundamental integer data type in C that occupies exactly one byte of memory and is explicitly designated to represent signed numerical values. It is the smallest addressable signed integer type in the C language.

Technical Specifications

- Size: Guaranteed to be exactly 1 byte. The number of bits in this byte is defined by the `CHAR_BIT` macro in `<limits.h>` (8 on virtually all modern general-purpose architectures).
- Memory Representation: The Most Significant Bit (MSB) acts as the sign bit. Modern C implementations use two’s complement representation for negative values.
- Value Range:
  - The C Standard guarantees a minimum range of `-127` to `127`.
  - On standard 8-bit two’s complement systems, the exact range is `-128` to `127`.
  - These limits are exposed via the `SCHAR_MIN` and `SCHAR_MAX` macros in `<limits.h>`.
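The size and range described above can be checked on any given implementation. The following is a minimal sketch; the function name `print_schar_limits` is a hypothetical helper chosen for illustration, and the printed values depend on the platform.

```c
#include <limits.h>
#include <stdio.h>

/* Hypothetical helper: prints the size and range of signed char
   as reported by the current implementation. */
void print_schar_limits(void)
{
    printf("sizeof(signed char) = %zu\n", sizeof(signed char)); /* always 1 */
    printf("CHAR_BIT            = %d\n", CHAR_BIT);
    printf("SCHAR_MIN           = %d\n", SCHAR_MIN);
    printf("SCHAR_MAX           = %d\n", SCHAR_MAX);
}
```

Calling `print_schar_limits()` from `main` on a typical 8-bit two’s complement platform would be expected to report a range of -128 to 127, but the macros are the authoritative source for the platform at hand.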

Type Distinctness

In the C type system, `char`, `signed char`, and `unsigned char` are three distinct types.

Even if an implementation makes plain `char` behave as a signed integer, `char` and `signed char` remain distinct types. Consequently, a pointer to `char` (`char *`) is not compatible with a pointer to `signed char` (`signed char *`), and converting between the two requires an explicit cast.
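One way to observe this distinctness is the C11 `_Generic` selection, which accepts the three character types as separate associations only because they are distinct. The sketch below assumes a C11 (or later) compiler; `TYPE_NAME` and `show_distinct_types` are hypothetical names introduced for illustration.

```c
#include <stdio.h>

/* Hypothetical helper macro: maps the static type of an expression to a
   string. Listing all three character types compiles only because they
   are distinct types. */
#define TYPE_NAME(x) _Generic((x),      \
    char:          "char",              \
    signed char:   "signed char",       \
    unsigned char: "unsigned char",     \
    default:       "other")

void show_distinct_types(void)
{
    char plain = 'A';
    signed char sc = 'A';
    unsigned char uc = 'A';

    printf("%s\n", TYPE_NAME(plain)); /* prints "char" */
    printf("%s\n", TYPE_NAME(sc));    /* prints "signed char" */
    printf("%s\n", TYPE_NAME(uc));    /* prints "unsigned char" */

    /* Converting char * to signed char * needs an explicit cast. */
    signed char *p = (signed char *)&plain;
    printf("%d\n", *p);
}
```

Removing the cast in the last assignment would typically produce a diagnostic about incompatible pointer types.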

Syntax and Initialization
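A minimal sketch of common declaration and initialization forms follows. The variable names and the function `demo_initialization` are hypothetical and exist only for illustration.

```c
#include <stdio.h>

/* Hypothetical demo: declares signed char objects in several ways and
   returns a sum of some of them so the result can be checked. */
int demo_initialization(void)
{
    signed char temperature = -40;         /* decimal integer constant */
    signed char mask = 0x7F;               /* hexadecimal constant, 127 */
    signed char letter = 'A';              /* character constant; stores its code */
    signed char samples[3] = { -1, 0, 1 }; /* array of signed char */

    printf("temperature = %hhd\n", temperature);
    printf("mask        = %hhd\n", mask);
    printf("letter      = %c\n", letter);

    /* The operands are promoted to int before the additions. */
    return temperature + mask + samples[0] + samples[1] + samples[2];
}
```

Every initializer above fits in the guaranteed minimum range of -127 to 127, so the stored values do not depend on the implementation.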

Format Specifiers and I/O

When interacting with standard I/O functions like `printf` or `scanf`, formatting a `signed char` requires precise combinations of length modifiers and conversion specifiers:

- `printf` and `%hhd`: Because `printf` is a variadic function, a `signed char` argument is subject to default argument promotions and is always passed as an `int`. In the format string `%hhd`, `d` is the conversion specifier for a signed decimal integer, and `hh` is the length modifier. The `hh` modifier instructs `printf` to take the promoted `int` argument and convert it back to a `signed char` before formatting.
- `scanf` and `%hhd`: To read a numeric value directly into a `signed char` variable, use `%hhd`. This correctly expects a pointer of type `signed char *`.
- The `%c` Conversion Specifier: The `c` conversion specifier is used for character data. Passing a `signed char` to `printf("%c", val)` is valid (due to promotion to `int`). With `scanf`, `%c` is conventionally paired with a `char *` argument; passing a pointer to a `signed char` (`signed char *`) instead is a pointer type mismatch that can trigger format warnings (e.g., `-Wformat`), depending on the compiler and warning level.
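The `%hhd` rules above can be sketched with `snprintf` and `sscanf`, which follow the same format-string rules as `printf` and `scanf` but work on strings. The helper names `format_schar` and `parse_schar` are hypothetical, and input outside the range of `signed char` is not handled here.

```c
#include <stdio.h>

/* Hypothetical helper: writes value as decimal text into buf.
   The argument is promoted to int; hh tells snprintf to convert it
   back to signed char before formatting. Returns the text length. */
int format_schar(char *buf, size_t size, signed char value)
{
    return snprintf(buf, size, "%hhd", value);
}

/* Hypothetical helper: reads a decimal number from text into *out.
   With %hhd, sscanf expects a signed char * argument.
   Returns 1 on success and 0 otherwise. */
int parse_schar(const char *text, signed char *out)
{
    return sscanf(text, "%hhd", out) == 1;
}
```

For example, `parse_schar("-42", &v)` stores -42 in a `signed char v`, and `format_schar(buf, sizeof buf, v)` then writes the text `-42` into `buf`.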

Integer Promotion

When a `signed char` is used in an arithmetic expression, it is subject to integer promotion. Before the operation is evaluated, the `signed char` is implicitly promoted to an `int` (preserving its sign and value). When both operands are `signed char`, the result of the arithmetic operation is of type `int` unless it is explicitly cast back to `signed char`.
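The promotion can be observed directly. The sketch below assumes a C11 (or later) compiler for `_Generic`; `IS_INT`, `promoted_sum`, and `show_promotion` are hypothetical names used only for illustration.

```c
#include <stdio.h>

/* Hypothetical helper macro: evaluates to 1 when the expression has
   type int, and 0 otherwise. */
#define IS_INT(expr) _Generic((expr), int: 1, default: 0)

/* Both operands are promoted to int, so the sum does not wrap at 127. */
int promoted_sum(signed char a, signed char b)
{
    return a + b;
}

void show_promotion(void)
{
    signed char a = 100;
    signed char b = 100;

    printf("a + b = %d\n", promoted_sum(a, b));            /* prints 200 */
    printf("a is int:     %d\n", IS_INT(a));               /* prints 0 */
    printf("a + b is int: %d\n", IS_INT(a + b));           /* prints 1 */
    printf("cast is int:  %d\n", IS_INT((signed char)(a + b))); /* prints 0 */
}
```

The sum 200 is representable because the addition happens in `int`. Casting that result back to `signed char` yields a value that no longer equals 200, since 200 lies outside the range of the type.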

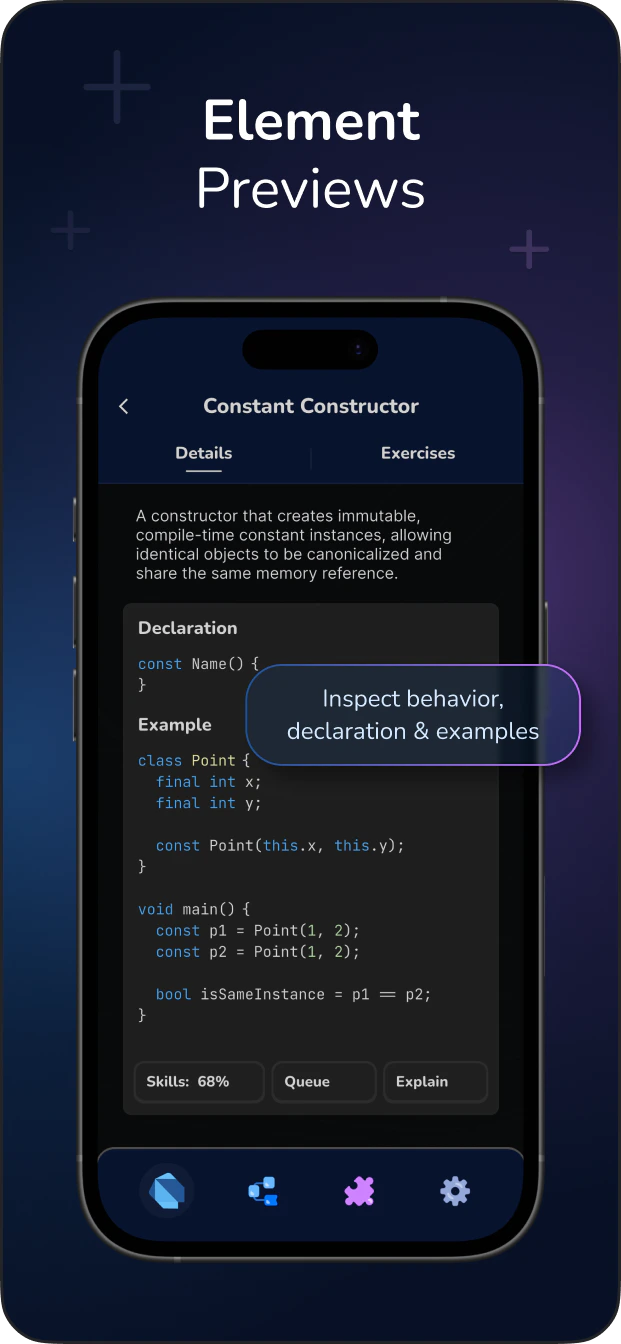
